Half of Americans’ Faces Are in FBI’s Facial Recognition Program

BY Dexter Tam

On March 22, the House Committee on Oversight and Government Reform held a hearing to review the Federal Bureau of Investigation's use of facial recognition technology and other programs.

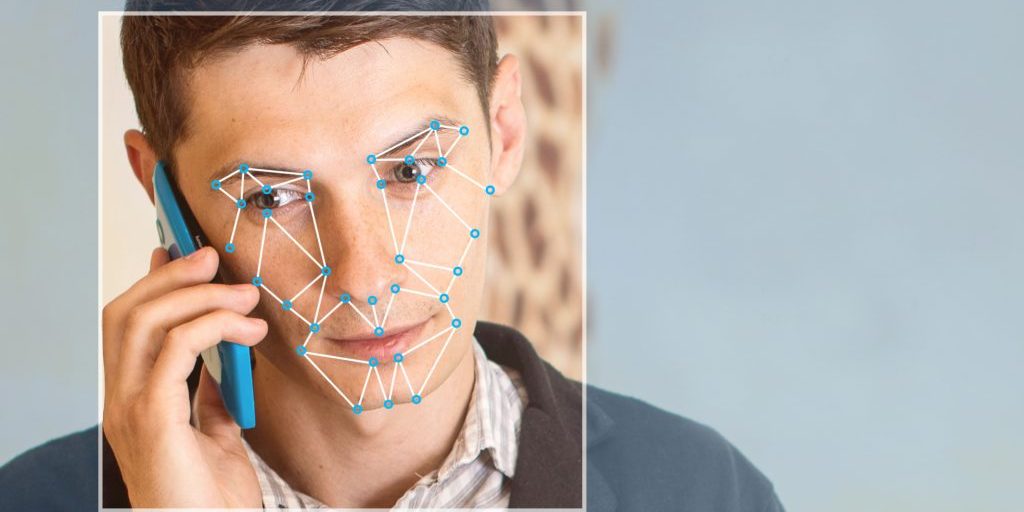

Facial recognition technology uses an algorithm to analyze a subject’s facial features, such as the length and width of the nose, eyes and ears. Law enforcement agencies across the country have used this technology to determine whether the person they are analyzing is a potential suspect in their investigation.

The FBI has two facial recognition programs: Next Generation Identification Interstate Photo System (NGI-IPS) and Facial Analysis, Comparison, and Evaluation Services (FACE Services). Alvaro Bedoya, the Executive Director of Georgetown Law’s Center on Privacy & Technology, stated during the hearing that the NGI-IPS program “hosts a database of 24.9 million mugshots.” FACE Services has over 411.9 million photos, including driver’s license, passport and visa photos. These photos were given to the FBI by 18 states via a memorandum of understanding, a formal agreement between two parties that is not legally binding. The FBI will continue asking additional states for photo access.

A recently published study by Georgetown Law’s Center on Privacy & Technology titled "The Perpetual Line-Up" states that 117 million American adults, or 1 in 2, are affected by having their photo in a facial recognition database.

A report by the Government Accountability Office was also brought up during the hearing. It revealed that the Department of Justice published a privacy impact assessment for the NGI-IPS program in 2008. However, according to the report, the FBI failed to update the assessment after the program underwent “significant changes.” A privacy impact assessment is an audit that checks whether the data collected is in line with regulatory policies and how potential risks can be managed.

House Oversight Committee Chairman Jason Chaffetz (R-Utah) questioned Kimberly Del Greco, Deputy Assistant Director of the FBI’s Criminal Justice Information Services Division, on why the FBI went years without publicly releasing the privacy impact assessment for the FACE Services program while still using facial recognition technology in real-world applications.

“You’re required by law to put out a privacy statement and you didn’t. And now we’re supposed to trust you with hundreds of millions of people’s faces in a system …” said Chaffetz.

The privacy impact assessments for NGI-IPS and FACE Services can be viewed here and here, respectively.

Limitations and consequences of facial recognition technology

Del Greco stated in the hearing that, “The FBI has tested and verified that the NGI FR Solution returns the correct candidate a minimum of 85 percent of the time within the top 50 candidates.” This means that as much as 15 percent of the time, the correct person does not appear among the 50 candidates returned, which leaves room for someone else to be wrongfully accused.

Facial recognition algorithms are also less accurate when it comes to identifying people with darker skin tones. A 2012 study titled "Face Recognition Performance: Role of Demographic Information" found that facial recognition technology was 5-10 percent less accurate for African Americans than for Caucasians. Because African Americans are arrested at a higher rate, their inclusion in additional facial recognition databases may lead to more civil liberties violations. These violations could include wrongful arrest or investigation, or unsubstantiated future monitoring. The report from the Government Accountability Office states that the FBI has yet to audit its facial recognition system.

Privacy may also be in jeopardy if facial recognition technology is used in real time. Facial recognition technology could be built into CCTV systems to monitor for potential criminal activity, but it may deter people from protesting or marching. Police officers use body cameras for transparency, but equipping those cameras with real-time facial recognition could incite fear in people who feel they are under constant surveillance.

As facial recognition technology advances without regulation, legal issues will soon surface, and courts will have to tackle them.
